Lenny’s Little Apollo Book

Apollo Federation Patterns: Combining Data Sources

April 24, 2020

This post is part of a series about Apollo Federation architectural patterns. Check out the introduction for some background on how the example code is structured.

When Apollo released their federation libraries, this was the first pattern I tried. I was thrilled to discover that it worked exactly as I had hoped.

At Square, some of our data stores are huge and highly optimized. Certain access patterns, such as fetching an entity by ID, are insanely fast. However, these same optimizations make other access patterns either slow or impossible.

In this contrived example, I have a data store for “tweets”. At Twitter scale, you often can’t store all your data in a single database when you’re using a traditional RDBMS like MySQL or Postgres. Instead, you can break the single database into a series of “shards” that store a subset of the data. My simple example stores tweets in four different “databases” by converting the tweet ID into a number between 0 and 3 inclusive. This allows for performant tweet fetches when you already know the ID.

    // One connection per shard.
    const DatabaseShards = [
      createConnection('db://db0'),
      createConnection('db://db1'),
      createConnection('db://db2'),
      createConnection('db://db3'),
    ]

    const resolvers = {
      Query: {
        tweet(_, { id }) {
          // Convert id to a number between 0 and 3 inclusive.
          const dbIndex = hash(id) % DatabaseShards.length
          const db = DatabaseShards[dbIndex]
          // This is fast because the individual shards are small.
          return db.findTweet(id)
        },
      },
    }
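
The `hash` helper in the resolver above isn't defined in this post. As a minimal sketch, assuming tweet IDs are strings, it could be a small FNV-1a-style hash over the characters of the ID. The names `hash`, `shardIndexFor`, and `SHARD_COUNT` here are illustrative assumptions, not part of the example repository.

```javascript
// Hypothetical stand-in for the `hash` helper: an FNV-1a-style 32-bit hash
// computed over the UTF-16 code units of the ID.
function hash(id) {
  const s = String(id);
  let h = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < s.length; i++) {
    h ^= s.charCodeAt(i);
    h = Math.imul(h, 0x01000193); // multiply by the FNV prime, 32-bit wraparound
  }
  return h >>> 0; // coerce to an unsigned 32-bit integer
}

// Assumed shard count, matching the four "databases" in the example.
const SHARD_COUNT = 4;

// Maps any ID to a stable shard index between 0 and SHARD_COUNT - 1.
function shardIndexFor(id) {
  return hash(id) % SHARD_COUNT;
}
```

The property that matters is that the same ID always maps to the same shard, so a fetch by ID only ever touches one small database.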

However, I also want to search for tweets that contain a particular word. Searching each database shard and combining the results may be possible, but it’s almost certainly inefficient on large enough data sets. This is especially true if your database shards don’t have the proper indexes for this kind of query. (And creating new indexes on a database with hundreds of gigabytes of data is expensive, both in the time it takes to migrate the database and the size of the index on disk!)

    const resolvers = {
      Query: {
        search(_, { word }) {
          // Hit all four databases and combine the results??
          // What if there are 64 or 1024 databases?
        },
      },
    }
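
To make the cost concrete, here is a rough sketch of what that fan-out could look like. The in-memory `shards` array and the `searchShard` and `searchAllShards` helpers are hypothetical stand-ins for real database connections and queries.

```javascript
// Hypothetical in-memory shards standing in for four separate databases.
const shards = [
  [{ id: '1', body: 'apple pie' }],
  [{ id: '2', body: 'apple banana carrot' }],
  [{ id: '4', body: 'banana bread' }],
  [{ id: '5', body: 'carrot cake' }],
];

// Each shard scans every row, standing in for a query without a word index.
function searchShard(shard, word) {
  const matches = shard.filter((tweet) => tweet.body.split(' ').includes(word));
  return Promise.resolve(matches);
}

// Fan out to every shard, wait for all of them, then merge the results.
async function searchAllShards(word) {
  const perShard = await Promise.all(
    shards.map((shard) => searchShard(shard, word))
  );
  return perShard.flat();
}
```

Every search pays for one query per shard and is only as fast as the slowest shard, so the cost grows with the shard count no matter how few tweets actually match.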

Instead, I have a second “database” indexed by word. In front of this index is a GraphQL API that returns an entity representation for the tweets that match the word.

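    const WordIndex = {
      apple: ['1', '2', '3'],
      banana: ['2', '4'],
      carrot: ['2', '3', '5'],
    }

    const resolvers = {
      Query: {
        search(_, { word }) {
          // Fall back to an empty list when the word isn't indexed.
          const tweetIds = WordIndex[word] || []
          // Return entity representations: just enough for the gateway to
          // fetch the remaining fields from the tweets service.
          return tweetIds.map((id) => {
            return { id }
          })
        },
      },
    }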

Here’s where the query planner magic comes in: given these entity representations, the planner will make another request to the tweet service, which can efficiently fetch the rest of the tweet data by ID. For this operation:

    query TweetsContainingWord($word: String!) {
      search(word: $word) {
        id
        body
        createdAt
      }
    }

The query planner generates this query plan. Note that the request to the canonical tweets service is in a Flatten node. This tells you that the gateway batches up the entity representations from the first request in a single call, avoiding the N+1 query problem.

    QueryPlan {
      Sequence {
        Fetch(service: "/sandbox/src/services/tweet-search.js") {
          {
            search(word: "everyday") {
              id
              __typename
            }
          }
        },
        Flatten(path: "search.@") {
          Fetch(service: "/sandbox/src/services/tweets.js") {
            {
              ... on Tweet {
                __typename
                id
              }
            } =>
            {
              ... on Tweet {
                body
                createdAt
              }
            }
          },
        },
      },
    }
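
On the tweets service side, the second fetch arrives as a single `_entities` query carrying every representation, and Apollo Federation resolves each one through the type's `__resolveReference` resolver. The sketch below is a simplified picture of that step, not the gateway's actual code. The `tweetsById` data, the `findTweet` helper, and the `map` standing in for the federation runtime are assumptions for illustration.

```javascript
// Hypothetical stand-in for the sharded lookup by ID.
const tweetsById = {
  '2': { id: '2', body: 'apple banana carrot', createdAt: '2020-04-02T00:00:00Z' },
  '4': { id: '4', body: 'banana bread', createdAt: '2020-04-04T00:00:00Z' },
};
function findTweet(id) {
  return tweetsById[id] || null;
}

const tweetResolvers = {
  Tweet: {
    // Called once per entity representation sent by the gateway.
    __resolveReference(reference) {
      return findTweet(reference.id);
    },
  },
};

// Shape of the single batched request the gateway sends to the tweets service.
const entitiesQuery = `
  query ($representations: [_Any!]!) {
    _entities(representations: $representations) {
      ... on Tweet { body createdAt }
    }
  }
`;

// Representations collected from the search service's response.
const representations = [
  { __typename: 'Tweet', id: '2' },
  { __typename: 'Tweet', id: '4' },
];

// Simplified stand-in for the federation runtime: look up the resolver by
// __typename and resolve every representation within the one request.
const entities = representations.map((rep) =>
  tweetResolvers[rep.__typename].__resolveReference(rep)
);
```

Because all of the representations travel in one request, the tweets service sees one call per search, not one call per matching tweet.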

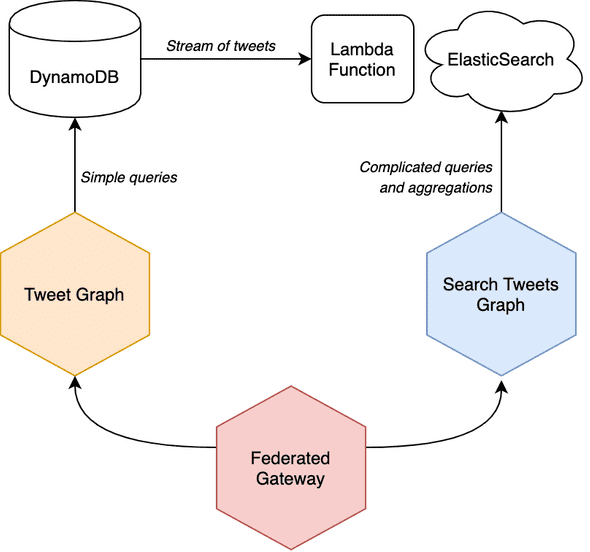

You could combine many kinds of data stores using this pattern. One architecture I’m interested in is using DynamoDB for simple access patterns, using DynamoDB Streams to replicate data into an ElasticSearch cluster, and using ElasticSearch for complex queries and aggregations.

One bonus feature of this pattern is that your “search” graph service can provide fields beyond the ID by using the @provides directive. For instance, if your search index also stored the tweet body and createdAt, the query planner could simplify the plan to a single request:

    type Tweet @key(fields: "id") {
      id: ID! @external
      body: String! @external
      createdAt: DateTime! @external
    }

    type Query {
      search(word: String): [Tweet!]! @provides(fields: "body createdAt")
    }
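
For `@provides` to be truthful, the search resolver has to actually return those fields. A minimal sketch, assuming the index stores a denormalized copy of each tweet under every word it contains (the `DenormalizedWordIndex` data and `searchResolvers` name are hypothetical):

```javascript
// Hypothetical index that stores body and createdAt alongside each tweet ID.
const DenormalizedWordIndex = {
  apple: [
    { id: '1', body: 'apple pie', createdAt: '2020-04-01T00:00:00Z' },
    { id: '2', body: 'apple banana carrot', createdAt: '2020-04-02T00:00:00Z' },
  ],
  banana: [
    { id: '2', body: 'apple banana carrot', createdAt: '2020-04-02T00:00:00Z' },
  ],
};

const searchResolvers = {
  Query: {
    search(_, { word }) {
      // Return full records so the gateway doesn't need a second fetch
      // for body and createdAt. Unknown words yield an empty list.
      return DenormalizedWordIndex[word] || [];
    },
  },
};
```

The trade-off is a larger index and more data to keep in sync, in exchange for skipping the round trip to the tweets service whenever a query asks only for the provided fields.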

It might be a good idea to denormalize certain fields and redundantly store them in your index service. This is especially true in “eventually consistent” systems. Suppose you allowed tweets to be edited. Consider this scenario:

- Someone tweets “hello world” and it’s stored in both the sharded database and the search index.

- The tweet is edited to say “goodbye world”.

- There’s a delay before the edit appears in the search index.

- Someone searches for tweets containing “hello”.

- The search index returns an id for the tweet because it’s still associated with “hello”.

- The user is confused because they searched for “hello” but received a tweet that says “goodbye world”.
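
The scenario above can be played out in a few lines. This is a toy simulation with hypothetical in-memory stores (`tweetStore`, `searchIndex`), not code from the example repository. It compares what the user sees when the search service returns only IDs with what they see when it provides the body from its own index.

```javascript
// Hypothetical canonical store and search index, initially in sync.
const tweetStore = { '1': { id: '1', body: 'hello world' } };
const searchIndex = { hello: [{ id: '1', body: 'hello world' }] };

// The edit lands in the canonical store; the index hasn't caught up yet.
tweetStore['1'].body = 'goodbye world';

// ID-only search: the body is fetched from the canonical store afterwards.
const idOnlyResults = searchIndex.hello.map(({ id }) => ({
  id,
  body: tweetStore[id].body,
}));

// Search with provided fields: the body comes from the index itself.
const providedResults = searchIndex.hello.map(({ id, body }) => ({ id, body }));

console.log(idOnlyResults[0].body); // prints "goodbye world"
console.log(providedResults[0].body); // prints "hello world"
```

With provided fields the result is stale, but it at least matches what the user searched for until the edit reaches the index.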

Would it be that hard to write imperative code that fetches ids from a search index and subsequently fetches entities from another database? Definitely not. We have microservices that do just that. But Apollo Gateway makes the task of combining multiple sources of data into a single API so simple and easy to understand that I would reach for this architecture first in many situations.

Check out my CodeSandbox for this example to explore this powerful pattern.

Written by Lenny Burdette in San Francisco. You can follow him on twitter but he doesn't tweet. Opinions written here do not necessarily reflect those of his employers and are subject to change.